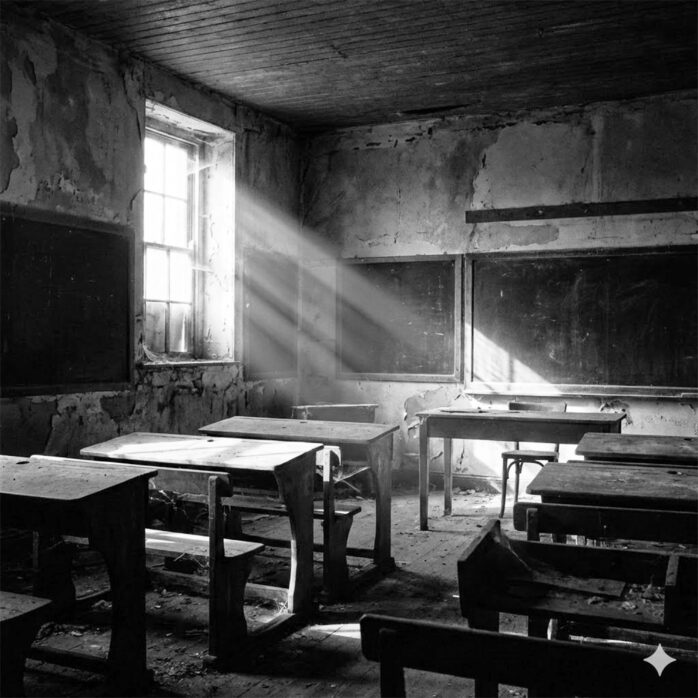

Every new information technology has sparked fresh panic about the future of teaching. Plato warned that writing itself would destroy memory and learning. The printing press set off fears that books would undermine scholarly authority. Thomas Edison said that motion pictures would replace schoolbooks. Radio, television, and personal computers each drew the same anxious forecasts, followed in the 2000s by a wave of predictions that online courses would empty out campuses. None of it happened. Each technology reshaped instruction while leaving the teacher-student relationship intact.

Generative AI has now joined the parade, and once again the loudest voices are asking the wrong question. The issue isn’t whether AI will replace faculty. It’s how instruction must adapt so that the human work stays at the center of teaching.

A good place to start is a recent Forbes interview with Ben Gomes, Google’s Chief Technologist for Learning and Sustainability, in which he made one simple point. The hardest problem in education is motivation. And it’s a problem AI will never solve. “Technology can improve how you learn and the details of it,” Gomes put it, “but the why you learn is a very human thing.”[1] That sentence should sit at the heart of every conversation about reforming college instruction. AI can deliver content, flag errors, generate practice exercises, and personalize feedback faster than any human. None of that addresses the prior question of why a student would bother engaging in the first place.

Gomes grounded the point in a lifetime of watching learners. He observed that high-achieving people almost never credit a book or a tool for unlocking their potential. They credit a person. A teacher who said something, who treated them differently, who made them feel that the work of learning mattered. Once that feeling took hold, the student could run forward on their own, and the tools became accelerators. But the ignition was always human. Decades of educational research echo the finding, with thousands of studies placing teacher-student relationships and instructor clarity among the strongest predictors of learning, well ahead of any technology.[2] If motivation comes from relationships rather than from content delivery, then any reform that pushes instructors further from their students is moving in the wrong direction.

Continue reading “Flipping the Script: Using AI to Motivate and Inspire”

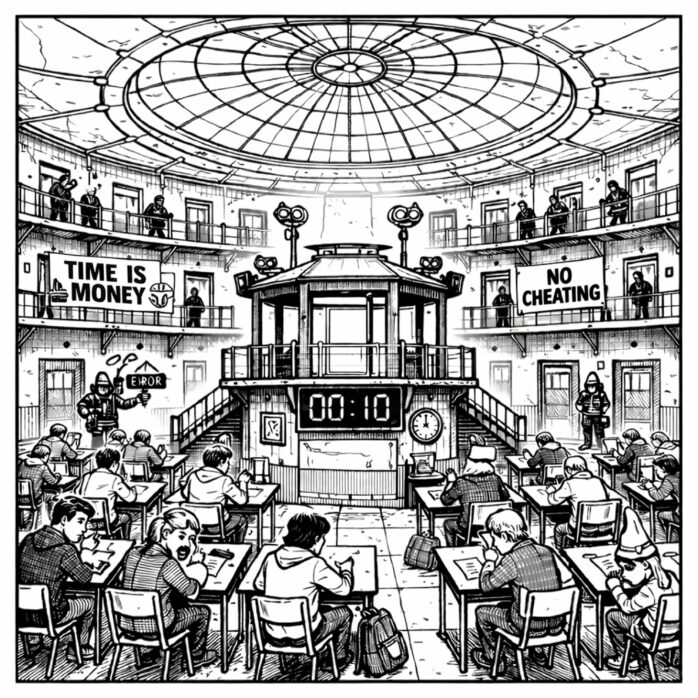

But once activity is translated into data, the data begin to influence the behavior they claim to measure. A brief pause can register as disengagement. Rapid typing can signal focus. Students eventually realize that unseen systems are interpreting their actions and may begin performing for algorithmic approval instead of thinking for themselves. What used to be an exchange between learner and teacher becomes a loop between student and machine. This shift matters because effort is no longer understood through experience but through metrics. In a traditional classroom, effort lived in rereading, revising, and wrestling with ideas. In digital spaces, it gets recorded as keystrokes, session length, and completion rates. These numbers are useful but incomplete. They capture what is visible and overlook what is internal. Confusion, insight, doubt, and breakthrough moments rarely leave a trace.